What is Docker?

Working with microservices can be complex, and Docker makes it easier. Docker is a container platform that lets you package an application together with its environment in a container, and makes it easy to link small, independent services together. It speeds up the development process, and because you can replicate your production environment locally, it helps eliminate environment-specific bugs.

Let’s understand a few important terms related to Docker that you should know.

What is a Docker Image?

Docker images are the building blocks of Docker containers. They are read-only templates, created with the docker build command, from which containers are created.

What is a Docker Container?

A Docker container is a running instance of a Docker image. It is an executable software package that includes everything the application needs to run: code, runtime, system tools, and libraries.

What is a Docker Registry?

A Docker registry is a repository where Docker images are stored. It can be private or public. You can use Docker’s cloud registry, called Docker Hub, which allows multiple users to collaborate on building an application. Teams within an organization can share Docker images easily by uploading them to Docker Hub.

In this blog post we will discuss how you can deploy ReactJs with Docker. We have divided the process into stages to make it easy to understand.

How to Deploy ReactJs with Docker?

To deploy ReactJs with Docker, we will assume that we have already created an application named react-demo-app. It is a simple web project made up of static files such as JavaScript, CSS, and a few images. All it needs at runtime is a web server such as Nginx or Apache.

Docker Multi-Stage Build

Docker introduced a feature called multi-stage builds in version 17.05. It allows you to use multiple FROM statements in your Dockerfile. Each FROM statement can use a different base image and begins a new stage of the build. You can selectively copy artifacts from one stage to another, leaving behind everything you don’t need in the final image. The result is a lean image.

Now we are ready to deploy ReactJs with Docker. To keep it simple, let’s divide the process into two stages:

Stage 1: Build

We start from a Node.js base image, which we extend and label. Here the stage is labeled “build”, but you can use a name of your choice.

The next step is to make a directory and set it as a working directory for our app.
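The first two steps of the build stage could be sketched as follows. The image tag node:20-alpine and the path /app are assumptions made for illustration, not values taken from the original post.

```dockerfile
# Stage 1: extend a Node.js base image and label the stage "build".
# The tag node:20-alpine is an assumption; use the version your app targets.
FROM node:20-alpine AS build

# Create /app if it does not exist and make it the working directory
# for the instructions that follow. The path /app is an assumed convention.
WORKDIR /app
```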

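Next, we copy the project’s package.json file on its own, before the rest of the sources, to take advantage of Docker’s layer cache: the dependency layer is then rebuilt only when package.json changes. We also add the project’s locally installed Node.js binaries to the PATH environment variable. Then we install all our dependencies by running npm install.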
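As a rough sketch, the dependency step might look like the fragment below. It assumes the working directory is /app, so the locally installed binaries live in /app/node_modules/.bin.

```dockerfile
# Copy only the dependency manifest first, so this layer and the install
# below are served from cache until package.json changes.
COPY package.json ./

# Put locally installed binaries (such as react-scripts) on PATH.
# Assumes WORKDIR is /app.
ENV PATH=/app/node_modules/.bin:$PATH

# Install the dependencies listed in package.json.
RUN npm install
```

If the project has a package-lock.json, copying it alongside package.json would make the installed versions reproducible.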

Finally, we copy our app sources and build the app with react-scripts by running the npm run build command. This makes our app ready for production.
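The final step of the build stage could look like this sketch. It assumes the build script in package.json invokes react-scripts build, which by default writes its output to a build folder inside the working directory.

```dockerfile
# Copy the rest of the application sources into the working directory.
COPY . ./

# Produce the optimized static bundle (written to ./build by react-scripts).
RUN npm run build
```

A .dockerignore file that excludes node_modules is usually worth adding, so that the host's dependencies are not copied over the ones installed in the image.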

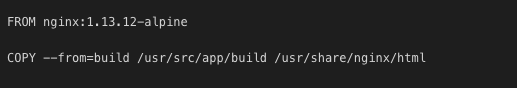

Stage 2: Production

We need to serve the app from a web server, so we start by extending the Nginx base image from the official Nginx repository on hub.docker.com. The variant used here is based on Alpine Linux, whose base image is only about 5 MB, which keeps the final image slim.

Now we can copy the contents of our build directory to the default Nginx directory. The result of the first stage is referenced here by the label we gave it, “build”.
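A minimal sketch of the start of the production stage follows. It assumes an earlier stage labeled "build" whose output sits in /app/build. The destination /usr/share/nginx/html is the directory the official Nginx image serves by default.

```dockerfile
# Stage 2: start from the official Alpine-based Nginx image.
FROM nginx:alpine

# Copy the static bundle out of the stage labeled "build".
# /app/build assumes WORKDIR /app and the default react-scripts output folder.
COPY --from=build /app/build /usr/share/nginx/html
```

Only the files named in COPY --from reach this stage; Node.js, node_modules, and the sources stay behind in the build stage.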

We need to expose port 80, as Nginx serves on this port. Later we will have to map this port on the host running the container. Below is the Dockerfile instruction that exposes the port:

EXPOSE 80

Now we have to define the default command that Docker runs when the container starts. We tell Docker to start the Nginx server and keep it in the foreground rather than running it as a daemon, so that the container stays alive.
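In a Dockerfile, that default command is commonly written as shown below. This is the usual form for the official Nginx image and may not be the exact line the original post used.

```dockerfile
# Run Nginx in the foreground so the container's main process keeps running.
CMD ["nginx", "-g", "daemon off;"]
```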

Build and Run Docker Image

After completing the above steps, we can build and tag the image by running the docker build command from within the project directory.
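A build command along these lines should work, assuming the Dockerfile sits in the project root. The tag react-demo-app is an assumption that simply reuses the application name.

```shell
# Build the image from the Dockerfile in the current directory and tag it.
# The tag react-demo-app is an assumed name based on the app in this post.
docker build -t react-demo-app .
```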

To run the freshly created image, we use the docker run command. We tell Docker to run the container detached in the background and to map the container’s internal port 80, where Nginx listens, to port 3000 on the host system where our Docker instance is running.
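As a sketch, the run command might look like the following. The image tag react-demo-app and the container name react-demo are hypothetical names chosen for illustration.

```shell
# -d runs the container detached in the background.
# -p 3000:80 publishes container port 80 on host port 3000.
# --name react-demo is a hypothetical container name.
docker run -d -p 3000:80 --name react-demo react-demo-app
```

With the container running, the app should be reachable at http://localhost:3000 on the host.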

With Docker’s multi-stage feature, we can keep the build and runtime environments separate while describing both in a single Dockerfile.
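Putting the steps above together, a complete Dockerfile might look like the sketch below. The Node.js tag, the /app path, and the build output folder are assumptions, so adjust them to match your project.

```dockerfile
# ---- Stage 1: build ----
# node:20-alpine is an assumed tag; use the Node.js version your app targets.
FROM node:20-alpine AS build
WORKDIR /app

# Install dependencies first so this layer is cached until package.json changes.
COPY package.json ./
ENV PATH=/app/node_modules/.bin:$PATH
RUN npm install

# Copy the sources and create the production bundle in /app/build.
COPY . ./
RUN npm run build

# ---- Stage 2: production ----
FROM nginx:alpine

# Take only the static bundle from the build stage.
COPY --from=build /app/build /usr/share/nginx/html

# Nginx listens on port 80 inside the container.
EXPOSE 80

# Keep Nginx in the foreground so the container stays up.
CMD ["nginx", "-g", "daemon off;"]
```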

The Bottom Line

By using Docker’s multi-stage builds, we separated the build environment from the runtime environment. Because the final image contains only the web server and the static files, deployments are quicker and the production container is leaner.

Stay tuned for more such reads!
